Over the past two years, few statistics have captured executive attention quite like the widely cited Massachusetts Institute of Technology finding that the majority of AI pilots fail to scale. The number has been repeated in boardrooms and strategy sessions across industries, often accompanied by a familiar diagnosis: the data wasn’t ready.

There is truth in that explanation. Poor data quality, fragmented data estates and weak governance frameworks can undermine trust in AI systems. If models are trained on incomplete or inconsistent data, their outputs will inevitably disappoint. In response, many organisations have invested heavily in data lakes, modern data platforms and stewardship programmes.

Yet even among enterprises that have made significant progress on data maturity, a stubborn reality remains. Promising pilots still stall. Intelligent prototypes still struggle to move beyond controlled environments. AI delivers insight in isolation but not impact at scale. The reason, I would argue, lies not primarily in data but in integration.

The Scaling Gap That Needs Attention

Most AI pilots are developed in relatively contained settings. Whether it is a traditional machine learning model or a large language model (LLM), the system is trained, fine-tuned, or prompted and validated in isolation. It may generate recommendations, summaries, predictions, or natural-language responses that appear highly compelling in demonstrations. But scaling is not about producing insight; it is about operationalising it.

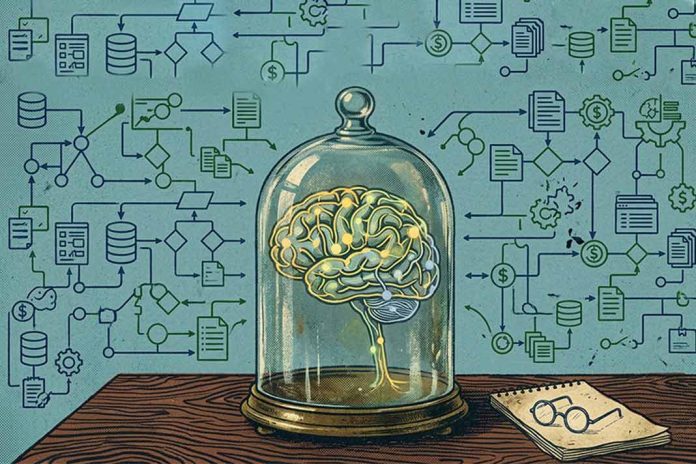

For AI to create enterprise value, it must move beyond analysis and into execution. It must trigger workflows, update systems of record, coordinate across departments and operate within real business processes. In other words, intelligence must be connected to the enterprise nervous system.

This is where many initiatives falter. Enterprises often discover that their AI models cannot easily access the systems they need, cannot act within existing workflows, and cannot reliably orchestrate actions across fragmented applications. The issue is not that the models are incapable. It is that the integration fabric required to embed them into the business is either brittle, siloed or incomplete.

A near-singular focus on data risks obscuring this deeper structural constraint. Data determines what an AI system can know. Integration determines what it can do.

From Pilots to the Agentic Enterprise

As AI capabilities evolve, so does the enterprise ambition. We are moving from narrow AI assistants towards what many describe as the Agentic Enterprise: an environment in which AI agents do not merely respond to prompts, but participate autonomously in business processes.

These agents can analyse context, make decisions within defined parameters and trigger actions across multiple systems. They can qualify leads, reconcile transactions, route support cases or initiate onboarding workflows. They are not static tools; they are dynamic participants in operational flows.

However, agents cannot operate in a vacuum. Their effectiveness depends entirely on how well they are embedded into the enterprise architecture. An agent that cannot access a CRM, ERP or HR system is reduced to a conversational interface. An agent that cannot reliably orchestrate APIs or respond to real-time events becomes an isolated experiment.

The promise of the Agentic Enterprise is not simply automation at scale. It is coordinated intelligence, where human expertise and machine-driven decision-making reinforce each other across connected systems. And that coordination is only possible through robust integration.


Integration as the Connective Tissue of Intelligence

Historically, integration has been viewed as plumbing: essential but unglamorous middleware that connects applications and data sources. In the age of AI, this perception must change. Integration is no longer a background utility. It is the connective tissue that determines whether intelligence can flow across the organisation.

Consider what it takes for an AI agent to operate meaningfully. It must retrieve contextual data from multiple systems. It must act on that data by invoking APIs or triggering workflows. It must respond to events in real time. And it must do so reliably, securely and consistently across environments. Each of these capabilities rests on integration.
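To make those requirements concrete, here is a minimal sketch of an agent that reaches enterprise systems only through an integration layer. Everything in it is an assumption made for illustration: the `IntegrationLayer` class, the `crm` and `erp` readers, the `route_case` action and the routing rule are hypothetical, and the "agent" is a fixed rule standing in for a model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class IntegrationLayer:
    """Hypothetical facade over enterprise systems (all names illustrative)."""
    readers: Dict[str, Callable[[str], dict]] = field(default_factory=dict)
    actions: Dict[str, Callable[[dict], dict]] = field(default_factory=dict)
    audit_log: List[str] = field(default_factory=list)

    def get_context(self, customer_id: str) -> dict:
        # Retrieve contextual data from every registered system.
        return {name: read(customer_id) for name, read in self.readers.items()}

    def invoke(self, action: str, payload: dict) -> dict:
        # Act through a governed entry point; unexposed actions are refused.
        if action not in self.actions:
            raise PermissionError(f"action not exposed: {action}")
        self.audit_log.append(action)
        return self.actions[action](payload)


def handle_event(event: dict, layer: IntegrationLayer) -> dict:
    """Stand-in for an agent's decision step: a fixed rule, not a model."""
    ctx = layer.get_context(event["customer_id"])
    overdue = ctx["erp"]["overdue_invoices"] > 0
    queue = "billing" if overdue else "general"
    return layer.invoke("route_case", {"case_id": event["case_id"], "queue": queue})


# In-memory stand-ins for a CRM, an ERP and a case-routing API.
layer = IntegrationLayer(
    readers={
        "crm": lambda cid: {"tier": "gold"},
        "erp": lambda cid: {"overdue_invoices": 2},
    },
    actions={"route_case": lambda p: {"status": "routed", **p}},
)
result = handle_event({"customer_id": "c-1", "case_id": "k-9"}, layer)
print(result)
```

Remove the `erp` reader or the `route_case` action and the same decision logic can no longer do anything useful, which is the point of the paragraph above: the constraint sits in the connections, not in the intelligence.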

Without a mature integration layer, AI remains constrained to pockets of experimentation. With it, intelligence can be embedded directly into core processes, from customer engagement to supply chain management. Scaling AI, therefore, is less about building more sophisticated models and more about ensuring that those models can interact seamlessly with the enterprise ecosystem.

The Evolution of the Integration Layer

For this to happen, integration itself must evolve. Traditional integration focused on connecting applications and moving data between systems. Modern integration must do more. It must orchestrate agents alongside APIs. It must support event-driven architectures where actions are triggered dynamically. It must enable composable components that can be reused across workflows. And it must provide a coherent way to manage interactions between humans, applications and intelligent agents.
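One way to picture such a layer is a small publish/subscribe sketch in which an agent, a human step and a reusable component all react to events rather than calling each other directly. This is an illustrative assumption, not a description of any particular platform: the `EventBus` class, the topic names and the onboarding scenario are all hypothetical, and the human approval is simulated.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List

Handler = Callable[[dict], None]


class EventBus:
    """Minimal in-process publish/subscribe bus (illustrative only)."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._handlers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        # Deliver the event to each subscriber in subscription order.
        for handler in list(self._handlers[topic]):
            handler(event)


bus = EventBus()
trace: List[str] = []


def notify(event: dict) -> None:
    # Reusable component: subscribed to two different topics below.
    trace.append(f"notify:{event['employee']}")


def onboarding_agent(event: dict) -> None:
    # Stand-in for an agent: decides within a fixed parameter, then emits
    # a follow-up event instead of calling other systems directly.
    trace.append(f"agent:{event['employee']}")
    topic = "approval.requested" if event["needs_laptop"] else "onboarding.done"
    bus.publish(topic, event)


def human_approval(event: dict) -> None:
    # Stand-in for a human step; here it approves automatically.
    trace.append(f"approved:{event['employee']}")
    bus.publish("onboarding.done", event)


bus.subscribe("employee.hired", onboarding_agent)
bus.subscribe("approval.requested", human_approval)
bus.subscribe("approval.requested", notify)
bus.subscribe("onboarding.done", notify)

bus.publish("employee.hired", {"employee": "ana", "needs_laptop": True})
print(trace)
```

Because each participant only knows the topics it listens to and emits, a new agent or approval step can be added by subscribing it, without rewriting the components already in place.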

This evolution transforms the integration platform into an orchestration layer for digital intelligence. It becomes the environment where APIs, events, workflows and AI capabilities converge into a unified operational fabric.

In practical terms, this means empowering integration teams to extend their expertise into AI-driven scenarios. The skills required to design robust APIs, manage service interactions and ensure reliable system connectivity are now central to enabling agentic collaboration.

When integration is treated as a strategic capability rather than an afterthought, AI can move from experimentation to enterprise-wide impact.

A Strategic Reframing for CIOs

For CIOs and IT leaders, the reframing is straightforward but significant. The critical question is no longer simply whether the organisation’s data is ready for AI, but whether the integration architecture is capable of operationalising intelligence at scale. Are core systems exposed through well-governed APIs? Can workflows be orchestrated dynamically across business domains? Is there a cohesive layer that enables agents to interact reliably and predictably with enterprise systems?

Organisations that confront these questions head-on will find that scaling AI becomes a matter of architecture, not luck. Those that do not may continue to run impressive pilots that never quite reach production. Intelligence without integration is potential, not performance. AI rarely fails for lack of sophistication; it fails because it cannot connect. In the modern enterprise, connection is strategy.
