At its flagship GTC 2026 event, NVIDIA announced that its new Vera Rubin platform has entered full production.

The platform integrates seven chips—Vera CPU, Rubin GPU, NVLink 6, ConnectX-9 SuperNIC, BlueField-4 DPU, Spectrum-6 Ethernet and Groq 3 LPU—designed to function as a single system across training and inference.

NVIDIA is positioning this as a shift from discrete GPU clusters towards fully integrated systems optimised for sustained utilisation and token throughput.

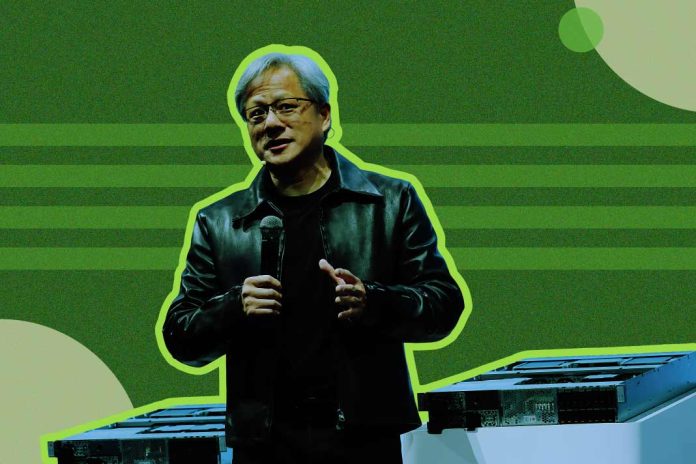

“Vera Rubin is a generational leap—seven breakthrough chips, five racks, one giant supercomputer—built to power every phase of AI,” said Jensen Huang, Founder and CEO of NVIDIA, in an announcement.

At the system level, NVIDIA is prioritising efficiency and density. Its NVL72 rack combines 72 GPUs and 36 CPUs with NVLink 6, targeting higher cluster utilisation and lower cost per token.

The company claims up to 10x higher inference throughput per watt than its previous generation, and says mixture-of-experts training will need significantly fewer GPUs.

A key addition is the Vera CPU, designed for reinforcement learning environments and orchestration layers around GPU workloads. It features 88 custom cores and high-bandwidth LPDDR5X memory, with NVIDIA claiming roughly 2x efficiency and 50% faster performance than conventional rack-scale CPUs.

The CPU is positioned as a central component in agentic AI systems, where large numbers of environments and tools must be run alongside model inference and training.

“The CPU is no longer simply supporting the model; it’s driving it,” Huang said.

The inclusion of the Groq 3 LPU reflects NVIDIA’s deeper push into inference, building on its December 2025 agreement with Groq. Under that deal, NVIDIA secured a non-exclusive licence to Groq’s inference technology and brought key engineers into the company, in a transaction reportedly valued at around $20 billion.

That deal is now materialising in hardware: the Groq-based LPX rack integrates 256 LPU processors optimised for low-latency inference and large-context decoding, and is designed to work alongside NVIDIA GPUs in a split inference pipeline.

NVIDIA is effectively formalising a two-stage architecture—GPU-heavy prefill, and LPU-driven decode—to improve throughput and efficiency at scale.
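To make the split concrete, here is a minimal Python sketch of a two-stage pipeline. It is a toy illustration only, not NVIDIA's or Groq's software: the names `KVCache`, `prefill`, `decode` and `generate` are hypothetical, and the "model" is a stand-in arithmetic rule. It assumes that prefill processes the whole prompt in one pass and hands a cache to a decode stage that then emits tokens one at a time.

```python
from dataclasses import dataclass, field


@dataclass
class KVCache:
    """Hypothetical stand-in for the state handed from prefill to decode."""
    tokens: list[int] = field(default_factory=list)


def prefill(prompt: list[int]) -> KVCache:
    # Stage 1: consume the full prompt in a single pass.
    # In the architecture described above, this is the GPU-heavy stage.
    return KVCache(tokens=list(prompt))


def decode(cache: KVCache, max_new_tokens: int) -> list[int]:
    # Stage 2: emit one token per step, extending the cache each time.
    # In the architecture described above, this is the LPU-driven stage.
    generated = []
    for _ in range(max_new_tokens):
        # Toy rule in place of a real model forward pass (assumption).
        next_token = (sum(cache.tokens) + len(cache.tokens)) % 1000
        cache.tokens.append(next_token)
        generated.append(next_token)
    return generated


def generate(prompt: list[int], max_new_tokens: int) -> list[int]:
    cache = prefill(prompt)                 # would run on the prefill pool
    return decode(cache, max_new_tokens)    # would run on the decode pool
```

The point of the separation is that each stage can be scheduled on hardware suited to it: the only thing crossing the boundary is the cache object, so the two functions could in principle run on different pools of processors.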

“Enterprises and developers are using Claude for increasingly complex reasoning, agentic workflows and mission-critical decisions,” said Dario Amodei, CEO of Anthropic. “NVIDIA’s Vera Rubin platform gives us the compute, networking and system design to keep delivering while advancing the safety and reliability our customers depend on.”

Beyond compute, NVIDIA is moving other bottlenecked functions into dedicated layers. BlueField-4 STX introduces a dedicated KV-cache storage layer, while Spectrum-6 networking is designed for high-throughput east-west traffic across large clusters. Together, these components indicate a move towards tightly coupled, yet functionally specialised, infrastructure layers.

On the deployment side, NVIDIA introduced the Vera Rubin DSX reference design, standardising how AI factories are built and operated across compute, power, cooling and networking. Supporting software such as DSX Max-Q and DSX Flex is designed to optimise power allocation and integrate data centres with grid systems, addressing power constraints in large-scale deployments.

“In the age of AI, intelligence tokens are the new currency, and AI factories are the infrastructure that generates them,” Huang said.

The company is also extending its Omniverse platform to create digital twins of AI factories, allowing operators to simulate thermals, power usage and workload behaviour before physical deployment—effectively shifting infrastructure validation upstream.

Cloud providers, including Amazon Web Services, Google Cloud, Microsoft Azure and Oracle Cloud Infrastructure, along with OEMs such as Dell Technologies and Supermicro, are expected to deploy Vera Rubin-based systems. Model developers, including OpenAI, Meta and Mistral AI, are aligning around the platform for large-scale training and long-context inference.

NVIDIA said Vera Rubin systems will be available through partners in the second half of 2026.
